You open up your favorite GenAI design tool and type something like, "Design a dashboard for a project management tool that helps teams track progress across multiple workstreams." You hit enter. In 30 seconds, you're looking at a fully formed solution. Layout, labels, hierarchy, color. It looks like a real product. It looks like something a designer would deliver. Maybe better than what a designer would deliver, because it's already in code.

With all of this hyper-productivity, people are tempted to ask: "Why do I need a designer when AI did it in two minutes instead of two weeks?" It's a fair question.

It seems like design just happened. But it didn't.

What actually happened was mere creation. And creating something isn't the same thing as design, even when the output looks identical. This distinction sounds subtle, but it's the difference between a solution shaped by understanding and one shaped by mathematical probability. And if you don't know the difference, you're going to miss a huge opportunity.

How the Trap Works

Here's what most people don't realize about how generative AI "thinks." It doesn't, at least not the way we do.

AI doesn't reason toward a solution the way a person does. It predicts what comes next, one token (roughly a word or a piece of a word) at a time, based on patterns in the massive amount of data it was trained on. Every "decision" it makes is a calculation of what's most likely to come next, given what it's seen before. There's no weighing of tradeoffs. No consideration of your specific users. No tension between competing ideas. Just mathematical probability, over and over, until it completes the output you asked for.

Now follow that logic one step further. If every step in the process is a bet on what's most probable, then the final output gravitates toward the most conventional answer. The most common thought. A solution built from everything that already exists.
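To make the "one token at a time" idea concrete, here's a toy sketch in Python. It assumes a made-up table of next-token probabilities (real models compute these with a neural network over a huge vocabulary, not a lookup table) and uses greedy decoding, which always takes the single most probable next token. The names `NEXT_TOKEN_PROBS` and `greedy_decode` and all the numbers are hypothetical, chosen only for illustration.

```python
# A toy "language model": for each current token, a hypothetical probability
# distribution over the next token. The numbers are invented for illustration.
NEXT_TOKEN_PROBS: dict[str, dict[str, float]] = {
    "<start>": {"dashboard": 0.6, "timeline": 0.3, "map": 0.1},
    "dashboard": {"with": 0.7, "showing": 0.2, "as": 0.1},
    "timeline": {"with": 0.6, "showing": 0.4},
    "map": {"with": 1.0},
    "with": {"cards": 0.5, "charts": 0.4, "constellations": 0.1},
    "showing": {"charts": 0.8, "constellations": 0.2},
    "as": {"cards": 1.0},
    "cards": {"<end>": 1.0},
    "charts": {"<end>": 1.0},
    "constellations": {"<end>": 1.0},
}


def greedy_decode(probs: dict[str, dict[str, float]], max_steps: int = 10) -> list[str]:
    """Build an output by always taking the single most probable next token."""
    token = "<start>"
    output: list[str] = []
    for _ in range(max_steps):
        candidates = probs[token]
        # Pick the highest-probability continuation; no alternative is explored.
        token = max(candidates, key=candidates.get)
        if token == "<end>":
            break
        output.append(token)
    return output


print(" ".join(greedy_decode(NEXT_TOKEN_PROBS)))
```

Running it prints `dashboard with cards`, and it prints the same thing every time. The unusual option, `constellations`, is in the table but never surfaces, because at no step is it the most probable choice. Real tools usually sample with some randomness (often controlled by a "temperature" setting) rather than always taking the top token, which adds variation, but the draw is still weighted toward the likely continuations.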

Think about that for a second. You asked AI to solve a design problem, and it gives you the statistical average of every similar solution it's ever seen. It doesn't explore three wildly different directions. It doesn't sketch something deliberately terrible to find where the boundaries are. It doesn't hold two contradictory ideas in tension to see which one reveals something unexpected. It doesn't do any of those things. It's not built to diverge. It's built to converge.

That's not designing. That's lowest-common-denominator creation.

This gets especially dangerous when you consider what designers actually work on. The kinds of problems designers tackle aren't simple or routine. Horst Rittel and Melvin Webber called them "wicked problems," challenges so deeply complex, with so many intertwined variables, that they resist straightforward solutions. They're usually novel. They don't have a single correct answer. They require you to feel your way through the mess of competing needs and tradeoffs. A probability engine that produces the most common thought is fundamentally mismatched with problems that demand uncommon solutions.

Now, this doesn't mean the output is bad. It's polished. It's usable. But it wasn't shaped by understanding the problem. It was shaped by the mathematical probability of the most common composition. And there's a canyon of difference between those two things.

Why the Mess Matters

So why care about the process if the output looks fine?

Because design isn't about the output. It never was. The value of design lives in the exploration and understanding that happens before the final solution takes shape. Skip that exploration, and you don't just get a less creative answer. You get a less informed one. You get a less powerful one.

Design researcher Nigel Cross has studied how designers think. One of his most important findings is that design problems can't be fully understood until they're solved. The act of exploring solutions is what reveals the true shape of the problem. You literally can't understand what you're solving for until you start trying to solve it. This is why "just gather the requirements first" never quite works for novel design challenges. The people giving you requirements can't fully articulate what they need until they see options in front of them. That's not a flaw. That's how human understanding works.

Bill Buxton made a critical distinction between sketching and prototyping that's incredibly relevant here. Sketching is about exploring. It's loose, quick, and intentionally rough. You're not committing to anything. You're learning. Prototyping is about refining. Both are about understanding.

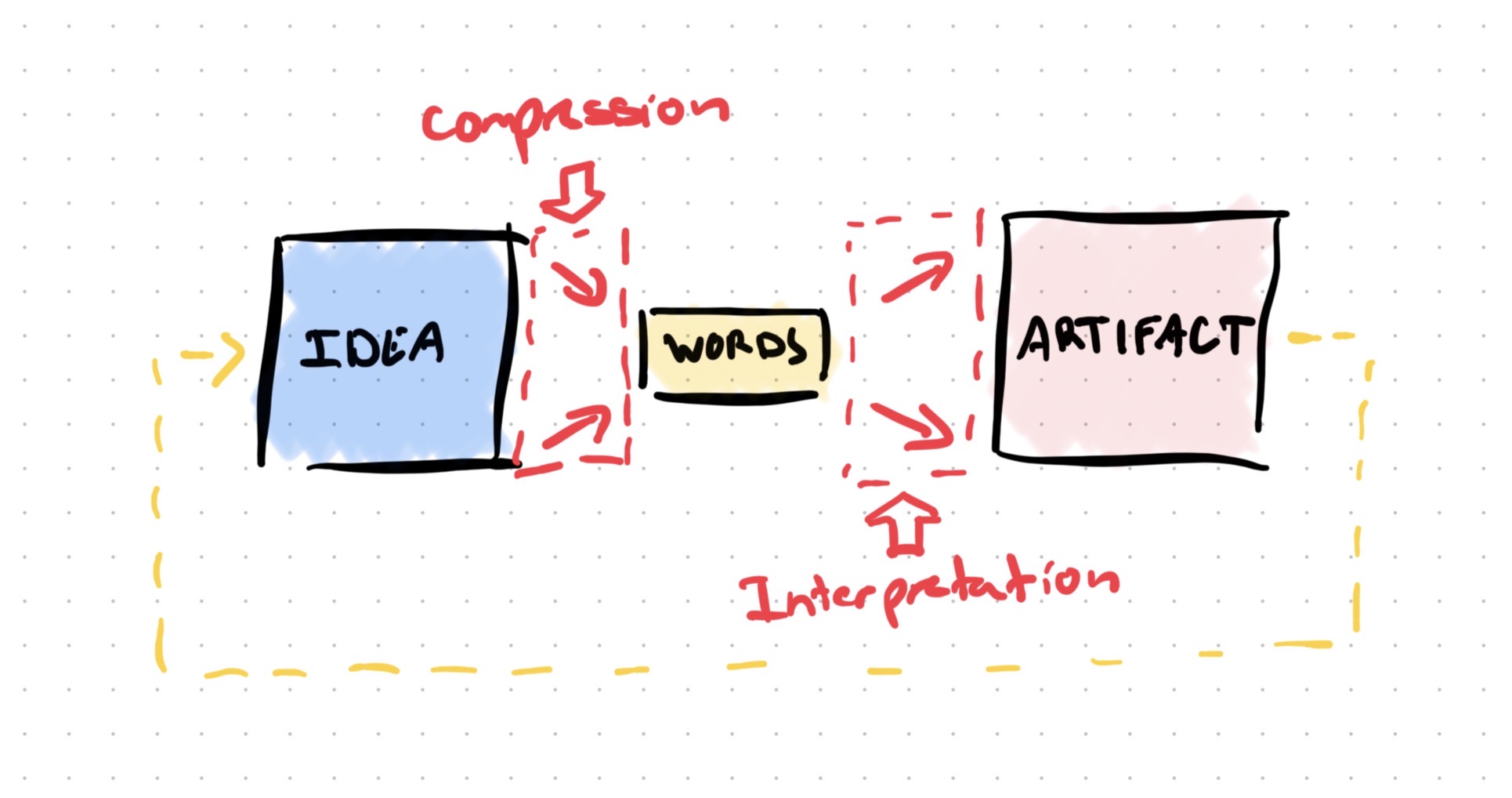

AI jumps straight to prototype-level output from words. The problem with that? Ideas are rich, contextual, full of nuance. Words are a compressed, lossy format. When someone says "make it intuitive," there's a complex idea behind that request, shaped by their experience, their frustrations, their context. But all of that gets squeezed down into three words. It's like compressing a high-res image into a tiny JPEG. The file gets smaller, but the detail disappears.

AI takes that compressed input and tries to reconstruct an idea from it. It produces something that looks plausible, but the nuance got lost in translation. It's a one-way trip from compressed words to finished output. Sure, it may ask some follow-up questions, but it's virtually impossible to fully transcribe conceptual ideas into words.

A designer does something different. They take those same lossy words and sketch three options. "Did you mean this? Or this? Or this?" Each sketch is a translation check, a way to decompress the idea back into something people can react to. That moment when someone says "not that, but close" is where the real learning happens. It's a round-trip translation. Words go out, options come back, understanding gets built in the loop. AI never creates that loop because it never offers the range of options needed to spark it.

The cost of skipping all this? You get a plausible output, not a novel solution.

Making This Visible

Imagine your VP asks, "What if we approached this differently?" The designer who explored multiple options can pivot. They've already considered alternatives. They've got a mental map of the territory: what works, what doesn't, and why. The exploration gave them that.

The person who used AI to generate the answer has nothing to fall back on. Nobody explored why it was the right answer, so nobody can explain why it should or shouldn't change. They didn't build the understanding. They just accepted the output.

That's the tricky part about this probability trap. The AI-generated output and the designed output can look identical on the surface. You can't tell the difference by looking at a screenshot. The difference only becomes visible when it matters. And eventually, it will matter.

This isn't about AI being bad. AI is incredibly impressive at what it does. But what it does is produce a statistically average answer to a surface-level reading of the problem. That's a powerful capability, if you wield it the right way.

The question isn't "Should we use AI?" Of course you should. The question is "Do we understand how AI works, and how it can be used well?" Because right now, a lot of teams don't. They're wowed by the speed. Impressed by the polish. And moving on before anyone asks whether the solution is actually right. The probability trap isn't about AI failing. It's about us forgetting what design is supposed to do in the first place.

Using AI Like a Designer

So how do you actually use AI without falling into the probability trap? You use it the way you'd use any powerful tool: with intention, at the right time, and in service of a process that's bigger than the tool itself.

- Generate a baseline, then react against it. Let AI take its best shot first. Now you know what the most conventional solution looks like. That's useful information. Look at it critically. What assumptions did it make? What's missing? Where did it play it safe? Use the AI output as a foil, not a finish line. It shows you the average, and your job is to decide if the average is good enough or if the problem demands something better.

- Accelerate the exploration. AI can absolutely be part of your design process from the start. The key is that you're driving, not riding. Sketch. Run a Crazy 8s session. Map out flows on a whiteboard. Argue with your PM (or AI) about the tradeoffs. Sit with contradictions long enough to learn from them. Use AI to generate variations, challenge your assumptions, or fill in blind spots. But make sure the messy work of understanding the problem by exploring multiple directions actually happens instead of getting skipped. AI is an incredible accelerator. Don't hand it the wheel.

- Force AI to diverge. This is my favorite one. If you're going to use AI during exploration, make it work like a brainstorm participant, not a decision-maker. Tell it to generate five wildly different approaches, including intentionally bad ones. You're not looking for THE answer. You're looking for reactions, comparisons, and learning. The bad options might reveal a boundary you hadn't considered. The weird option might spark a direction you wouldn't have tried. You're still the one evaluating. You're still the one deciding what's worth pursuing and what's revealing about the problem. AI accelerates your exploration.

- Use AI to stress-test, not solve. After you've done some design work, hand your solution to AI and ask it to break it. What edge cases did you miss? What user scenarios could go sideways? What accessibility gaps exist? Let AI be the critic, not the creator. It can be quite good at poking holes when you give it something specific to evaluate. (There's lots of negativity on the interwebs it was trained on.)

- Compare your direction to AI's. Here's a fun test. After you've done your exploration, sketched your options, and landed on a direction, ask AI to solve the same problem from scratch. Don't give it your work. Just give it the original brief. If your solution looks nothing like what AI produced, that's a good sign. You've probably found something that probability alone wouldn't have surfaced. If your solution and AI's are nearly identical, ask yourself whether you explored far enough.
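The "force AI to diverge" and "stress-test" moves above mostly come down to how you phrase the request. As a minimal sketch, here are two hypothetical Python helpers that turn those instructions into reusable prompt text. The names `divergence_prompt` and `stress_test_prompt`, their parameters, and the exact wording are assumptions made up for this example, not part of any tool; paste the output into whichever AI tool you use.

```python
def divergence_prompt(brief: str, n_directions: int = 5, n_bad: int = 2) -> str:
    """Ask for deliberately different directions, including some bad ones."""
    if not 0 <= n_bad <= n_directions:
        raise ValueError("n_bad must be between 0 and n_directions")
    return (
        f"Design brief: {brief}\n\n"
        f"Give me {n_directions} wildly different approaches to this brief. "
        f"Make {n_bad} of them intentionally bad, and label which ones they are. "
        "Do not recommend a winner. For each approach, state the core assumption "
        "it makes about the users and the tradeoff it accepts."
    )


def stress_test_prompt(solution: str) -> str:
    """Hand over a finished direction and ask for critique, not a redesign."""
    return (
        f"Here is a proposed design:\n{solution}\n\n"
        "Do not redesign it. Try to break it instead: list edge cases it misses, "
        "user scenarios that could go sideways, and accessibility gaps."
    )


brief = ("A dashboard for a project management tool that helps teams "
         "track progress across multiple workstreams.")
print(divergence_prompt(brief))
```

Asking it not to pick a winner keeps the model in the brainstorm-participant role, so the evaluating and deciding stay with you.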

And if you want a head start, here's a snippet I use to personalize how an LLM collaborates with me. Teaching AI how you think is the first step toward using it like a designer.

The Value Was Never the Deliverable

AI creates incredibly fast. Faster than most of us can create. It's useful. Don't dismiss it. The ability to go from direction to polished output in minutes instead of hours is remarkable, and it's only going to get better. I'm excited about what AI brings to the table.

But production was never the hard part of design. The hard part is the exploration, the contradiction, the mess that builds understanding. It's holding three bad options next to two good ones and figuring out what makes the good ones good. It's sketching something terrible and learning where the boundaries of the problem actually live. It's the human, squishy, nonlinear work of making sense of ambiguity.

AI is architecturally built to skip that mess. That's the nature of a probability engine. Probability doesn't do "messy." That's not a flaw. It's just what it is. And once you understand that, you can use it with clear eyes instead of false expectations.

So use AI to build faster. Use it to stress-test your thinking. Use it to explore beyond your own blind spots. Because the moment you stop exploring, you stop understanding. And AI gives you no excuse to stop. It gives you every reason to go further.